1. Introduction

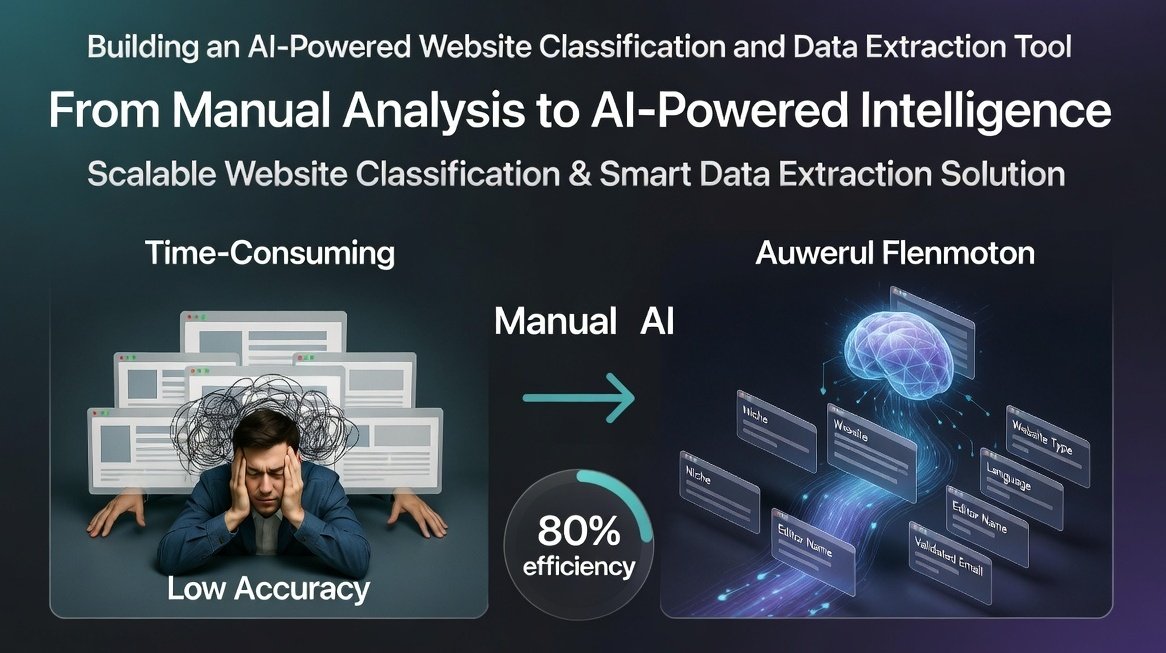

In today’s data-driven digital ecosystem, businesses often rely on large volumes of website data for market research, lead generation, and strategic decision-making. However, manually analyzing websites to extract relevant information such as niche, type, language, and contact details is both time-consuming and inefficient.

This case study highlights how CnEl India Private Limited developed a scalable AI-powered website classification and data extraction tool designed to process hundreds to thousands of websites in bulk. The solution enabled accurate categorization, intelligent data extraction, and structured output, significantly improving efficiency and data usability for the client.

2. Client Background

The client is a digital marketing and research-focused organization that frequently analyzes large sets of websites for outreach, partnership opportunities, and content analysis. Their workflows required collecting and organizing key information from multiple websites, including niche classification and contact details.

Previously, this work was done manually or with basic automation, which led to inconsistent results, low accuracy, and limited scalability.

3. Challenges Faced

3.1 Manual Data Collection

The client’s team spent significant time visiting each website individually to gather information, leading to inefficiencies and delays.

3.2 Lack of Accurate Classification

Existing methods struggled to correctly identify the primary niche or category of websites, especially when content was complex or multi-topic.

3.3 Difficulty in Extracting Contact Information

Finding accurate editor or contact email addresses was challenging due to inconsistent website structures.

3.4 Scalability Issues

The client needed to process between 500 and 1,000 websites per batch, which was not feasible with manual or semi-automated approaches.

3.5 Data Organization

Collected data often lacked structure, making it difficult to use for further analysis or outreach campaigns.

4. Project Objectives

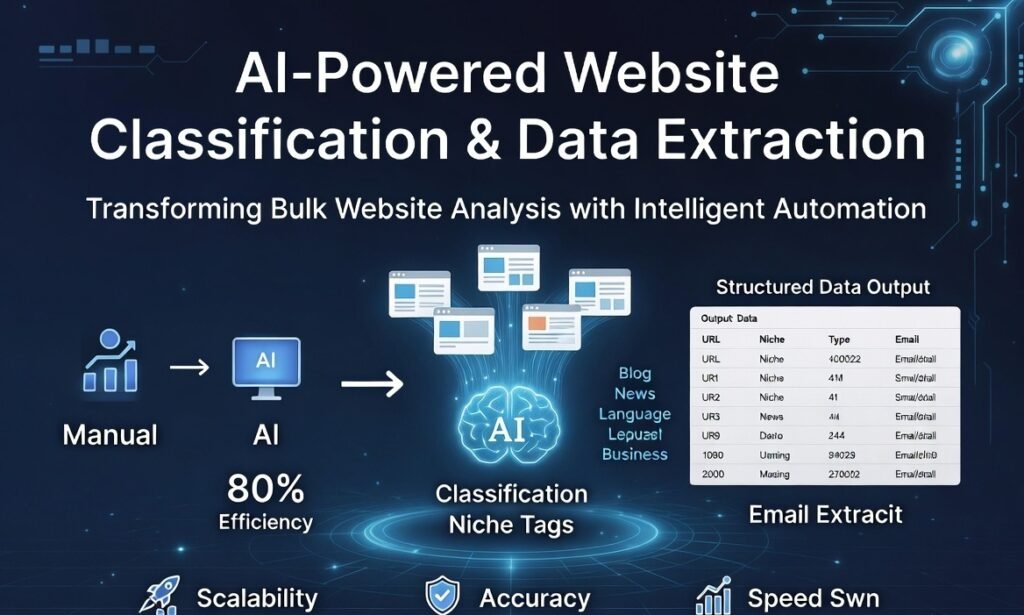

The primary goal of this project was to design and develop an intelligent system capable of:

- Processing large batches of websites efficiently

- Identifying the primary niche or topic of each website

- Classifying websites by type (such as blog, news, or business)

- Detecting the language used on the website

- Extracting editor or author names where available

- Identifying and validating contact email addresses

- Delivering structured output in a usable format

5. Our Approach

5.1 Requirement Analysis

CnEl India Private Limited began by understanding the client’s workflow, data requirements, and expected output format. This helped define clear success criteria for accuracy, scalability, and usability.

5.2 System Architecture Design

We designed a modular and scalable architecture that could handle bulk processing efficiently. The system was structured to perform multiple tasks in sequence:

- Website content retrieval

- Data parsing and cleaning

- AI-based classification

- Contact information extraction

- Data validation and formatting
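To illustrate the sequential, modular design, here is a minimal Python sketch in which each task is a function that takes and returns a shared per-site context. The `SiteContext` structure, the stage names, and the hard-coded page returned by `retrieve` are hypothetical placeholders, not the production code. The point is that stages can be added, replaced, or reordered independently, and a failure in one stage is recorded for that site instead of crashing the whole batch.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class SiteContext:
    """Hypothetical per-site state passed from stage to stage."""
    url: str
    html: Optional[str] = None
    text: Optional[str] = None
    fields: dict = field(default_factory=dict)
    errors: list = field(default_factory=list)


Stage = Callable[[SiteContext], SiteContext]


def run_pipeline(ctx: SiteContext, stages: List[Stage]) -> SiteContext:
    """Run stages in order; on failure, record the error and stop for this site."""
    for stage in stages:
        try:
            ctx = stage(ctx)
        except Exception as exc:
            ctx.errors.append(f"{stage.__name__}: {exc}")
            break
    return ctx


def retrieve(ctx: SiteContext) -> SiteContext:
    # Placeholder: a real stage would download ctx.url over HTTP.
    ctx.html = "<h1>Travel tips</h1><p>Contact: editor@example.com</p>"
    return ctx


def parse_and_clean(ctx: SiteContext) -> SiteContext:
    # Strip tags and collapse runs of whitespace into plain text.
    ctx.text = " ".join(re.sub(r"<[^>]+>", " ", ctx.html).split())
    return ctx


def classify(ctx: SiteContext) -> SiteContext:
    # Stand-in for the AI classifier: a single keyword rule.
    ctx.fields["niche"] = "travel" if "travel" in ctx.text.lower() else "unknown"
    return ctx


def extract_contacts(ctx: SiteContext) -> SiteContext:
    # Naive email pattern; real extraction is more involved.
    ctx.fields["emails"] = re.findall(r"[\w.+-]+@[\w-]+\.[\w.-]+", ctx.text)
    return ctx
```

Running `run_pipeline(SiteContext("https://example.com"), [retrieve, parse_and_clean, classify, extract_contacts])` leaves `niche` and `emails` in `ctx.fields`. A validation or formatting stage would simply be appended to the list.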

5.3 AI-Based Classification Model

A key component of the system was an intelligent classification engine capable of analyzing website content and determining:

- The primary niche or industry

- The type of website based on content patterns

- The language used

The model was designed to handle diverse website structures and content variations, ensuring high accuracy across different domains.
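The case study does not specify which model powers the classifier. The sketch below therefore uses a deliberately simple rule-based stand-in, only to show the shape of the three outputs (niche, type, language) and one way to flag multi-topic sites. The keyword and stopword lists, the `0.8` closeness threshold, and the type heuristics are all hypothetical. In practice, an AI model would replace `classify_niche` and `classify_type`, and a dedicated language-identification tool would replace the stopword count.

```python
import re
from collections import Counter

# Hypothetical keyword and stopword lists; illustrative only.
NICHE_KEYWORDS = {
    "travel": {"travel", "trip", "hotel", "flight", "destination"},
    "finance": {"loan", "invest", "bank", "credit", "tax"},
    "health": {"health", "diet", "fitness", "doctor", "symptom"},
}
STOPWORDS = {
    "en": {"the", "and", "is", "of", "to", "in"},
    "es": {"el", "la", "y", "de", "que", "en"},
    "de": {"der", "die", "und", "ist", "zu", "das"},
}


def tokenize(text):
    return re.findall(r"\w+", text.lower())


def _score(counts, vocab):
    # Total occurrences of each label's words in the page.
    return {label: sum(counts[w] for w in words) for label, words in vocab.items()}


def classify_niche(counts):
    """Return (primary niche, multi_topic flag)."""
    ranked = sorted(_score(counts, NICHE_KEYWORDS).items(), key=lambda kv: kv[1], reverse=True)
    (best, best_score), (_, runner_up) = ranked[0], ranked[1]
    if best_score == 0:
        return "unknown", False
    # Flag multi-topic sites when the runner-up scores close to the leader.
    return best, runner_up >= 0.8 * best_score


def detect_language(counts):
    lang, score = max(_score(counts, STOPWORDS).items(), key=lambda kv: kv[1])
    return lang if score > 0 else "unknown"


def classify_type(url, counts):
    # Crude URL and content patterns for the site type.
    if "/blog" in url or counts["posted"] or counts["comments"]:
        return "blog"
    if counts["breaking"] or counts["newsroom"]:
        return "news"
    if counts["pricing"] or counts["services"]:
        return "business"
    return "other"


def classify_site(url, text):
    counts = Counter(tokenize(text))
    niche, multi_topic = classify_niche(counts)
    return {
        "niche": niche,
        "multi_topic": multi_topic,
        "type": classify_type(url, counts),
        "language": detect_language(counts),
    }
```

Returning a `multi_topic` flag alongside the primary niche is one way to surface the complex, multi-topic sites that rule-based methods tend to misclassify, so they can be reviewed or routed to a stronger model.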

5.4 Data Extraction Mechanism

We developed advanced extraction logic to identify:

- Author or editor names from visible sections such as articles or about pages

- Contact email addresses from multiple locations within the website

The system could navigate different page structures and identify relevant data even when it was not explicitly labeled.
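Below is a minimal sketch of this kind of extraction using only Python's standard-library `html.parser`. The signals it looks for (a `<meta name="author">` tag, elements whose `class` mentions "author" or that carry `rel="author"`, `mailto:` links, and email-shaped strings in visible text) are common conventions assumed here for illustration. They are not a description of the production extraction rules, and real pages need more heuristics than these.

```python
import re
from html.parser import HTMLParser

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class ContactParser(HTMLParser):
    """Collects author-name hints and email candidates from one HTML page."""

    def __init__(self):
        super().__init__()
        self.authors = []
        self.emails = []
        self._in_author = False

    def handle_starttag(self, tag, attrs):
        # Attributes without a value arrive as None; normalize them to "".
        attr = {k: (v or "") for k, v in attrs}
        if tag == "meta" and attr.get("name", "").lower() == "author" and attr.get("content"):
            self.authors.append(attr["content"].strip())
        if tag == "a" and attr.get("href", "").lower().startswith("mailto:"):
            # Drop the "mailto:" prefix and any ?subject=... query.
            self.emails.append(attr["href"][len("mailto:"):].split("?")[0])
        if "author" in attr.get("class", "").lower() or attr.get("rel", "").lower() == "author":
            self._in_author = True

    def handle_endtag(self, tag):
        # Crude: any closing tag ends the author span.
        self._in_author = False

    def handle_data(self, data):
        if self._in_author and data.strip():
            self.authors.append(data.strip())
        self.emails.extend(EMAIL_RE.findall(data))


def extract_contacts(html):
    parser = ContactParser()
    parser.feed(html)
    parser.close()
    # dict.fromkeys removes duplicates while keeping first-seen order.
    return {
        "authors": list(dict.fromkeys(parser.authors)),
        "emails": list(dict.fromkeys(e.lower() for e in parser.emails)),
    }
```

Because the same address often appears both as visible text and in a `mailto:` link, the sketch lowercases and de-duplicates candidates before they reach the validation step.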

5.5 Email Validation Layer

To improve data reliability, we implemented a validation process that:

- Filters out invalid or duplicate email addresses

- Prioritizes high-confidence contact emails

- Ensures accuracy for outreach purposes
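The filtering and prioritization steps can be sketched as follows. This is a syntax-and-heuristics check only: the regular expression, the preferred and rejected prefixes, and the scoring weights are hypothetical choices. The sketch does not perform DNS/MX or mailbox-level verification, which a production layer might add.

```python
import re

# Hypothetical syntax check; accepts common address shapes, not full RFC 5322.
EMAIL_SYNTAX = re.compile(r"^[a-z0-9._%+-]+@[a-z0-9-]+(\.[a-z0-9-]+)*\.[a-z]{2,}$")
# Hypothetical priority order: earlier prefixes rank higher.
PREFERRED_PREFIXES = ("editor", "contact", "hello", "info")
REJECT_PREFIXES = ("noreply", "no-reply", "donotreply")
# Image file names like logo@2x.png often look like emails to a scraper.
ASSET_SUFFIXES = (".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp")


def score_email(email, site_domain):
    local, domain = email.split("@", 1)
    score = 0
    if domain == site_domain or domain.endswith("." + site_domain):
        score += 10  # address hosted on the website's own domain
    for rank, prefix in enumerate(PREFERRED_PREFIXES):
        if local.startswith(prefix):
            score += len(PREFERRED_PREFIXES) - rank
            break
    return score


def validate_emails(candidates, site_domain):
    """Drop malformed, duplicate, and unusable addresses; best candidates first."""
    seen = set()
    valid = []
    for raw in candidates:
        email = raw.strip().lower()
        if email in seen or not EMAIL_SYNTAX.match(email):
            continue
        local = email.split("@", 1)[0]
        if email.endswith(ASSET_SUFFIXES) or local.startswith(REJECT_PREFIXES):
            continue
        seen.add(email)
        valid.append(email)
    # sorted() is stable, so equal scores keep their original order.
    return sorted(valid, key=lambda e: score_email(e, site_domain), reverse=True)
```

Ranking rather than discarding lower-scoring addresses keeps a fallback contact available when no editor-specific address exists on the site's own domain.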

5.6 Bulk Processing Engine

A high-performance processing engine was built to handle large batches of websites efficiently. The system was optimized to:

- Process hundreds of websites concurrently

- Maintain consistent performance across batches

- Handle timeouts and inaccessible websites gracefully
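One common way to process many sites concurrently in Python is a thread pool, since fetching pages is I/O-bound. The sketch below assumes that approach (the case study does not say which concurrency model was used), and `max_workers=32`, the 10-second timeout, and the `User-Agent` string are illustrative values. Every failure is caught per site and turned into a status field, so one unreachable site cannot abort the batch, and the timeout bounds how long a slow site can hold a worker.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def fetch_html(url, timeout=10.0):
    """Plain GET; the timeout applies to blocking socket operations."""
    req = urllib.request.Request(url, headers={"User-Agent": "site-classifier-sketch/0.1"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def process_one(url, fetch, analyze):
    """Fetch and analyze one site, converting any failure into a status record."""
    try:
        html = fetch(url)
    except Exception as exc:  # timeouts, DNS failures, HTTP errors, refused connections
        return {"url": url, "status": "unreachable", "error": str(exc)}
    try:
        return {"url": url, "status": "ok", **analyze(url, html)}
    except Exception as exc:  # unexpected page structure, missing data, etc.
        return {"url": url, "status": "parse_error", "error": str(exc)}


def process_batch(urls, fetch=fetch_html, analyze=lambda url, html: {}, max_workers=32):
    """Process a batch concurrently; results are returned in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda u: process_one(u, fetch, analyze), urls))
```

Passing `fetch` and `analyze` as parameters keeps the engine independent of the classification and extraction logic, and makes it easy to test with fake fetchers instead of live websites.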

5.7 Output Structuring

The final output was designed to be clean, organized, and ready for use. Each record included:

- Website URL

- Primary niche

- Website type

- Language

- Editor or author name (if available)

- Contact email (validated)

The data was structured in a format suitable for further analysis and integration into business workflows.
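A minimal sketch of one output record and a CSV export follows. The field names mirror the list above; CSV itself is an assumption, since the case study says only that the output was structured for analysis and integration. Missing author or email values are written as empty cells rather than placeholder text, so downstream tools can filter them cleanly.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class SiteRecord:
    url: str
    niche: str
    site_type: str
    language: str
    author: Optional[str] = None  # None when no editor/author name was found
    email: Optional[str] = None   # top-ranked validated address, if any


def write_csv(records, out):
    """Write records to any text file-like object, one row per website."""
    writer = csv.DictWriter(out, fieldnames=[f.name for f in fields(SiteRecord)])
    writer.writeheader()
    for rec in records:
        writer.writerow({k: ("" if v is None else v) for k, v in asdict(rec).items()})


def to_csv_string(records):
    buf = io.StringIO()
    write_csv(records, buf)
    return buf.getvalue()
```

When writing to a file instead of a string, open it with `newline=""`, as the `csv` module documentation recommends, so line endings are not doubled on Windows.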

6. Implementation Process

6.1 Data Collection and Testing

We tested the system using diverse website samples to ensure it could handle different industries, layouts, and languages.
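When testing against a hand-labeled sample, it helps to report accuracy per category as well as overall, so weak categories stand out during refinement. The helper below is a hypothetical illustration of that bookkeeping, not the evaluation procedure actually used. It assumes predictions and labels are dictionaries keyed by URL and counts a missing prediction as wrong.

```python
from collections import Counter


def evaluate(predicted, expected):
    """Overall and per-label accuracy on a labeled sample.

    Both arguments map URL -> label; a URL missing from `predicted` counts as wrong.
    """
    totals = Counter(expected.values())
    correct = Counter(label for url, label in expected.items() if predicted.get(url) == label)
    n = sum(totals.values())
    overall = sum(correct.values()) / n if n else 0.0
    per_label = {label: correct[label] / totals[label] for label in totals}
    return overall, per_label
```

A category whose per-label accuracy lags well behind the overall figure is a natural target for the refinement described next.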

6.2 Model Training and Refinement

The classification engine was continuously refined to improve accuracy, especially for complex or multi-topic websites.

6.3 Performance Optimization

We optimized the system for speed and efficiency, ensuring it could handle large batches without performance degradation.

6.4 Error Handling Mechanisms

Robust error handling was implemented to manage:

- Inaccessible websites

- Incomplete data

- Unexpected page structures

7. Results and Impact

The implementation of the AI-powered tool delivered significant benefits to the client:

7.1 Increased Efficiency

The system reduced manual effort by over 80%, allowing the client to process large volumes of websites in a fraction of the time.

7.2 High Accuracy Classification

The AI model achieved strong accuracy in identifying website niches and types, improving the quality of insights.

7.3 Reliable Contact Data

Validated email extraction improved outreach success rates and reduced bounce rates.

7.4 Scalability

The solution successfully handled batches of 500 to 1,000 websites, meeting the client’s scalability requirements.

7.5 Structured Data Output

Clean and organized data enabled seamless integration into the client’s existing workflows.

8. Key Learnings

8.1 Importance of Flexible Design

Websites vary widely in structure, so the system must be adaptable to different formats.

8.2 AI Enhances Data Understanding

Intelligent classification significantly improves data quality compared to rule-based methods.

8.3 Data Validation is Critical

Extracted data must be verified to ensure reliability and usability.

8.4 Scalability Requires Optimization

Efficient processing is essential when dealing with large datasets.

9. Future Enhancements

Based on the success of the project, several improvements were identified:

- Enhanced classification for multi-niche websites

- Deeper content analysis for better insights

- Integration with additional data sources

- Improved detection of hidden or indirect contact information

- Advanced filtering and segmentation features

10. Conclusion

This project demonstrates how AI-driven automation can transform the process of website analysis and data extraction. By combining intelligent classification with scalable processing, CnEl India delivered a solution that significantly improved efficiency, accuracy, and usability.

The tool not only solved the client’s immediate challenges but also provided a foundation for future growth and advanced data analysis. As businesses continue to rely on large-scale data, such intelligent systems will play a crucial role in enabling faster and more informed decision-making.