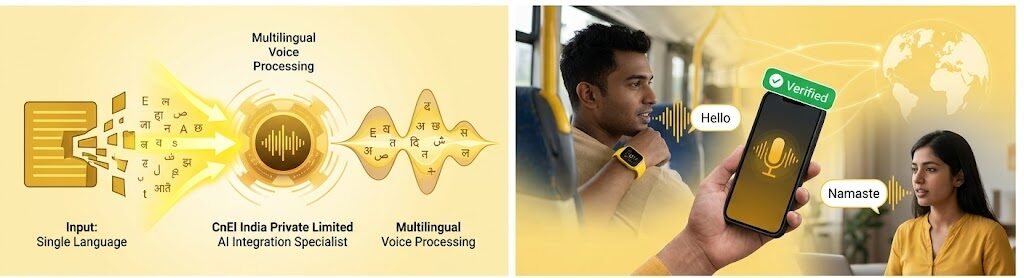

Introduction: Making Voice Technology Truly Universal

In today’s digital world, voice interaction has become one of the most powerful ways users connect with technology. From customer support systems and virtual assistants to healthcare platforms and enterprise operations, voice recognition is transforming how businesses deliver services.

However, one major challenge continues to limit the full potential of voice systems: language diversity.

Most voice recognition platforms perform well in a few major languages but struggle when users speak in regional languages, mixed dialects, or natural multilingual conversations. This creates poor user experiences, inaccurate responses, and operational inefficiencies—especially for businesses serving diverse global or multilingual audiences.

Our client faced exactly this challenge.

They required an experienced AI Integration Specialist to enhance their existing voice recognition system by implementing multilingual voice processing capabilities. The goal was to create a fast, accurate, and scalable voice platform that could understand multiple languages, accents, and speech patterns while maintaining high responsiveness and an excellent user experience.

This case study explains how CnEl India successfully transformed a limited voice recognition system into an intelligent multilingual voice processing solution capable of delivering better accessibility, stronger customer engagement, and future-ready scalability.

This project was not just about voice recognition.

It was about helping technology understand people the way people naturally speak.

Client Background

The client operated a service-based digital platform where voice interaction played a major role in customer engagement and operational workflows.

Their system handled:

- Customer support interactions

- Voice-based service requests

- Automated call assistance

- User authentication workflows

- Internal voice-driven operations

While the existing platform worked reasonably well for English-speaking users, performance dropped significantly for users who spoke regional languages or dialects, or who mixed languages within a single sentence.

This created serious business problems:

- Misunderstood customer requests

- Incorrect voice recognition results

- Delayed support resolution

- Lower customer satisfaction

- Reduced trust in automated systems

The client needed a multilingual voice system that could perform accurately across diverse user groups without sacrificing speed or reliability.

The Core Challenge

Multilingual voice processing is far more complex than simple translation.

The project required solving multiple technical and business challenges:

1. Language Recognition Accuracy

The system needed to accurately detect and process multiple languages and dialects.

2. Accent and Pronunciation Variation

Users pronounced the same words differently depending on region and language background.

3. Mixed-Language Conversations

Many users naturally switched between languages within the same sentence.

4. Response Speed

Voice systems must respond quickly—delays reduce trust and usability.

5. Integration with Existing Infrastructure

The new AI capabilities had to work smoothly with the current platform without disrupting operations.

6. Scalability for Future Expansion

The solution needed to support more languages and business use cases in the future.

This required both advanced AI implementation and strong system architecture planning.

CnEl India’s Strategic Approach

At CnEl India Private Limited, we approached the project using a human-language-first strategy.

Instead of forcing users to adapt to technology, we designed the system to adapt to users.

Our framework followed five stages:

- Understand: Analyze how real users speak

- Integrate: Build multilingual AI processing inside the existing platform

- Optimize: Improve recognition accuracy and response quality

- Validate: Test real-world voice scenarios across multiple languages

- Scale: Prepare the platform for future growth and expansion

This ensured the solution worked in practical business environments—not just technical testing.

Phase 1: Voice Workflow and User Behavior Analysis

Before implementing anything, we studied how users were actually interacting with the system.

This included:

- Reviewing existing voice interaction patterns

- Identifying high-failure recognition areas

- Mapping language and dialect usage

- Understanding regional pronunciation differences

- Analyzing mixed-language usage patterns

- Reviewing customer frustration points

- Identifying response delay issues

This step was critical.

Voice systems fail when they are designed for “perfect speech” instead of real human behavior.

Understanding natural communication created the foundation for success.

Phase 2: Multilingual Recognition Architecture

The next step was designing a voice recognition structure that could support multiple languages and dialects effectively.

We built an architecture that focused on:

- Language detection before processing

- Multi-language recognition pathways

- Dialect-aware interpretation

- Accent handling improvements

- Context-aware understanding for mixed-language inputs

This allowed the platform to recognize not just words—but meaning within real speech patterns.

Voice intelligence begins with contextual understanding.
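The case study does not publish implementation details, so the following is only a minimal sketch of what "language detection before processing" with separate recognition pathways could look like. It assumes an upstream language-identification step that returns a confidence score per language; the `LanguageRouter` class, the `min_confidence` threshold, and the stand-in recognizers are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# Hypothetical type: a recognizer takes raw audio bytes and returns a transcript.
Recognizer = Callable[[bytes], str]


@dataclass
class LanguageRouter:
    recognizers: Dict[str, Recognizer]  # language code -> dedicated pathway
    fallback: Recognizer                # general multilingual pathway
    min_confidence: float = 0.7         # assumed threshold; tune on real data

    def pick_language(self, scores: Dict[str, float]) -> Optional[str]:
        """Return the top-scoring language, or None when detection is unsure."""
        if not scores:
            return None
        language, score = max(scores.items(), key=lambda item: item[1])
        if score < self.min_confidence or language not in self.recognizers:
            return None
        return language

    def transcribe(self, audio: bytes, scores: Dict[str, float]) -> str:
        """Send audio to the detected language's pathway, else to the fallback."""
        language = self.pick_language(scores)
        if language is None:
            return self.fallback(audio)
        return self.recognizers[language](audio)


# Stand-in recognizers so the sketch runs without a real speech engine.
router = LanguageRouter(
    recognizers={
        "en": lambda audio: "[en transcript]",
        "hi": lambda audio: "[hi transcript]",
    },
    fallback=lambda audio: "[multilingual transcript]",
)
print(router.transcribe(b"", {"en": 0.92, "hi": 0.08}))  # confident: "en" pathway
print(router.transcribe(b"", {"en": 0.55, "hi": 0.45}))  # unsure: fallback pathway
```

Routing uncertain detections to a general fallback, rather than guessing, is one way to keep mixed-language or ambiguous input from being forced down the wrong single-language pathway.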

Phase 3: Speech Processing Optimization

Accuracy was the client’s highest priority.

We improved recognition quality by refining:

- Pronunciation handling

- Regional speech pattern recognition

- Context-based word interpretation

- Noise handling for real-world environments

- Voice clarity across different user conditions

- Response correction for common speech variations

This reduced misunderstandings significantly.

A voice system should understand intention, not just sound.
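As a rough illustration of "response correction for common speech variations", one simple option is to snap near-miss words in a transcript to a known domain vocabulary. This is a sketch under stated assumptions, not the method used in the project: it works on text output rather than audio, the `correct_transcript` helper and the sample vocabulary are hypothetical, and the `cutoff` of 0.8 is an arbitrary starting value.

```python
import difflib
from typing import Iterable


def correct_transcript(transcript: str, vocabulary: Iterable[str],
                       cutoff: float = 0.8) -> str:
    """Replace words that closely resemble a vocabulary term with that term."""
    vocab = sorted({word.lower() for word in vocabulary})
    corrected = []
    for word in transcript.lower().split():
        if word in vocab:
            corrected.append(word)  # already a known term
            continue
        # get_close_matches returns the best candidates above the cutoff.
        matches = difflib.get_close_matches(word, vocab, n=1, cutoff=cutoff)
        corrected.append(matches[0] if matches else word)
    return " ".join(corrected)


# Hypothetical vocabulary for a support workflow.
domain_terms = ["check", "my", "balance", "account"]
print(correct_transcript("chek my balence", domain_terms))
```

A stricter cutoff lowers the risk of "correcting" a word the user actually meant, at the cost of leaving more recognition errors untouched.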

Phase 4: Mixed-Language Conversation Handling

One of the biggest challenges was code-switching—when users switch languages naturally during speech.

For example:

Starting a sentence in English and finishing it in another language.

This is common in real conversations but difficult for voice systems.

We created logic that allowed:

- Smooth multi-language switching

- Context continuity across language changes

- Reduced recognition breakdowns

- Better understanding of natural conversation patterns

This made the platform feel significantly more human.

Users should not need to “speak like a machine.”

Machines should understand how people actually speak.
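To make the idea of code-switching concrete, here is a small sketch that splits a transcript into runs of the same writing script, so each run could be interpreted in its own language while the sentence stays together. It is an illustrative assumption, not the logic built for the client: it relies on Unicode character names, so it only catches switches that also change script (for example English to Hindi written in Devanagari) and would miss romanized text. The function names are hypothetical.

```python
import unicodedata
from typing import List, Tuple


def script_of(token: str) -> str:
    """Return the script of the first letter, e.g. 'LATIN' or 'DEVANAGARI'."""
    for ch in token:
        if ch.isalpha():
            # Unicode names begin with the script, e.g. 'LATIN SMALL LETTER A'.
            return unicodedata.name(ch, "UNKNOWN").split()[0]
    return "OTHER"  # digits or punctuation only


def segment_by_script(text: str) -> List[Tuple[str, str]]:
    """Group consecutive tokens that share a script into one segment."""
    segments: List[list] = []
    for token in text.split():
        script = script_of(token)
        # Script-neutral tokens stay with the segment they follow.
        if segments and (script == "OTHER" or segments[-1][0] == script):
            segments[-1][1].append(token)
        else:
            segments.append([script, [token]])
    return [(script, " ".join(tokens)) for script, tokens in segments]


print(segment_by_script("Please check मेरा बैलेंस"))
# [('LATIN', 'Please check'), ('DEVANAGARI', 'मेरा बैलेंस')]
```

Keeping the segments in order preserves the context of the whole sentence, which is what allows meaning to carry across the language change.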

Phase 5: Real-Time Response Optimization

Voice systems must be fast.

Even small delays reduce trust.

We optimized:

- Processing speed

- Voice input handling efficiency

- Response generation timing

- Low-latency communication between system layers

- Session continuity during active interactions

This ensured the experience felt smooth and responsive.

Fast responses create confidence.

Slow responses create doubt.
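Optimizing response time starts with measuring where the time goes. The sketch below shows one possible way to time each stage of a voice request and report a percentile; the `LatencyTracker` class and the stage names are hypothetical, and the nearest-rank percentile is just one common convention.

```python
import math
import time
from collections import defaultdict
from contextlib import contextmanager


class LatencyTracker:
    """Collects per-stage latencies in milliseconds."""

    def __init__(self):
        self.samples = defaultdict(list)

    @contextmanager
    def stage(self, name: str):
        """Time the enclosed block and record it under the given stage name."""
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            self.samples[name].append(elapsed_ms)

    def percentile(self, name: str, pct: float) -> float:
        """Nearest-rank percentile of the recorded samples for one stage."""
        data = sorted(self.samples[name])
        if not data:
            raise ValueError(f"no samples recorded for stage {name!r}")
        rank = max(1, math.ceil(pct * len(data) / 100))
        return data[rank - 1]


tracker = LatencyTracker()
for _ in range(20):
    with tracker.stage("recognition"):  # hypothetical stage name
        time.sleep(0.001)               # stand-in for real processing work
print(f"p95 recognition latency: {tracker.percentile('recognition', 95):.2f} ms")
```

Tracking a high percentile rather than the average highlights the slow interactions that users actually notice.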

Phase 6: Existing System Integration

The new multilingual processing needed to work inside the current platform without causing disruption.

We ensured:

- Seamless integration with existing workflows

- Compatibility with current customer support operations

- Stable performance across departments

- No interruption to live business operations

- Minimal retraining requirements for internal teams

Good integration should feel invisible.

The system should become better—not more complicated.

Phase 7: User Experience Enhancement

Voice recognition is not only technical—it is emotional.

Users trust systems that feel natural.

We improved the experience by focusing on:

- Clearer response handling

- Reduced frustration from repeated inputs

- Better recognition confidence

- Simpler correction flows

- Smoother multilingual interaction

This improved both customer satisfaction and operational efficiency.

Technology should reduce friction, not create it.

Phase 8: Testing Across Real-World Scenarios

Testing voice systems in controlled environments is not enough.

We validated performance using real-world usage scenarios:

- Multiple language interactions

- Accent-heavy speech patterns

- Noisy environment testing

- Fast speech and unclear pronunciation

- Mixed-language conversations

- Customer support simulation

- Operational workflow stress testing

This ensured business reliability—not just technical success.

Real users create real validation.
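The case study reports no specific metrics, but a common way to score scenarios like these is word error rate (WER): the word-level edit distance between the expected transcript and the recognized one, divided by the number of expected words. The following is a minimal sketch, with hypothetical function names and made-up sample data, that averages WER per test scenario.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    previous = list(range(len(hyp) + 1))  # distances against an empty reference
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            current.append(min(previous[j] + 1,     # deletion
                               current[j - 1] + 1,  # insertion
                               substitution))
        previous = current
    return previous[-1] / len(ref)


def wer_by_scenario(results: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """Average WER per scenario from (scenario, reference, hypothesis) triples."""
    grouped = defaultdict(list)
    for scenario, reference, hypothesis in results:
        grouped[scenario].append(word_error_rate(reference, hypothesis))
    return {scenario: mean(values) for scenario, values in grouped.items()}


# Made-up sample results, for illustration only.
sample = [
    ("noisy", "check my account balance", "check my count balance"),
    ("noisy", "reset my password", "reset my password"),
    ("accent", "talk to an agent", "talk to an agent"),
]
print(wer_by_scenario(sample))
```

Reporting the score per scenario, instead of one overall number, shows whether noisy, accented, or mixed-language speech is the weak spot.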

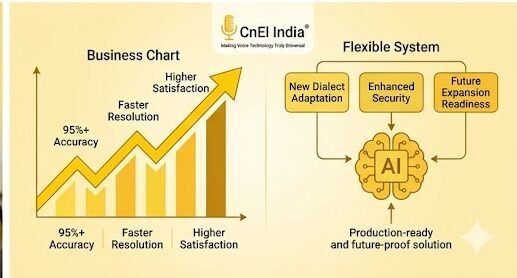

Business Transformation Achieved

The final result was a major improvement in both operational performance and user satisfaction.

Results Delivered

Higher Voice Recognition Accuracy

The system handled multilingual conversations with significantly better precision.

Improved Customer Satisfaction

Users experienced fewer misunderstandings and smoother support interactions.

Faster Service Resolution

Voice-driven requests were processed more efficiently, reducing delays.

Better Accessibility

Users could interact in their preferred language without communication barriers.

Stronger Business Confidence

Leadership gained trust in the platform’s ability to support diverse customer groups.

Future Expansion Readiness

The system was now prepared for additional languages, markets, and business applications.

What Made This Project Different

Many voice projects focus only on speech recognition.

This project focused on human communication.

What made CnEl India Private Limited different:

- Real-user speech analysis before implementation

- Mixed-language conversation handling

- Context-first voice understanding

- Performance optimization with usability focus

- Integration without operational disruption

- Long-term scalability planning

We did not simply improve voice recognition.

We improved how the business listened.

Key Lessons from the Project

This project reinforced several important principles:

Voice Systems Must Adapt to Humans

Users should never be forced to speak unnaturally.

Multilingual Means More Than Translation

Dialect, context, and switching behavior matter deeply.

Speed Builds Trust

Fast responses are part of good user experience.

Real Testing Matters Most

Controlled demos do not reflect real-world behavior.

Integration Should Improve Simplicity

Technology should reduce complexity—not increase it.

Long-Term Value Delivered

The client now benefits from:

- Strong multilingual voice processing capabilities

- Better customer engagement

- Higher operational efficiency

- Improved support performance

- Stronger accessibility across language groups

- Future-ready AI infrastructure

- Greater business confidence in automation systems

Their platform moved from limited recognition to intelligent multilingual communication.

Conclusion

Voice technology becomes truly powerful only when it understands people naturally.

Through strategic AI integration, multilingual speech optimization, and human-centered design, CnEl India Private Limited successfully transformed the client’s voice platform into a scalable multilingual voice processing system.

This project proved that the future of AI is not just smarter technology.

It is technology that listens better.

Because when systems understand language better, businesses understand customers better.